Human in the Loop

Automation handles the predictable majority of any process. The cases that remain — edge cases, high-stakes decisions, ambiguous inputs, exceptions that fall outside defined rules — are where human judgement is irreplaceable. MelodyArc is built around the idea that standing up human oversight should be straightforward, and removing it should be equally straightforward as AI capability and rules-based automation mature.

Where humans fit

Human involvement in a MelodyArc flow takes two forms:

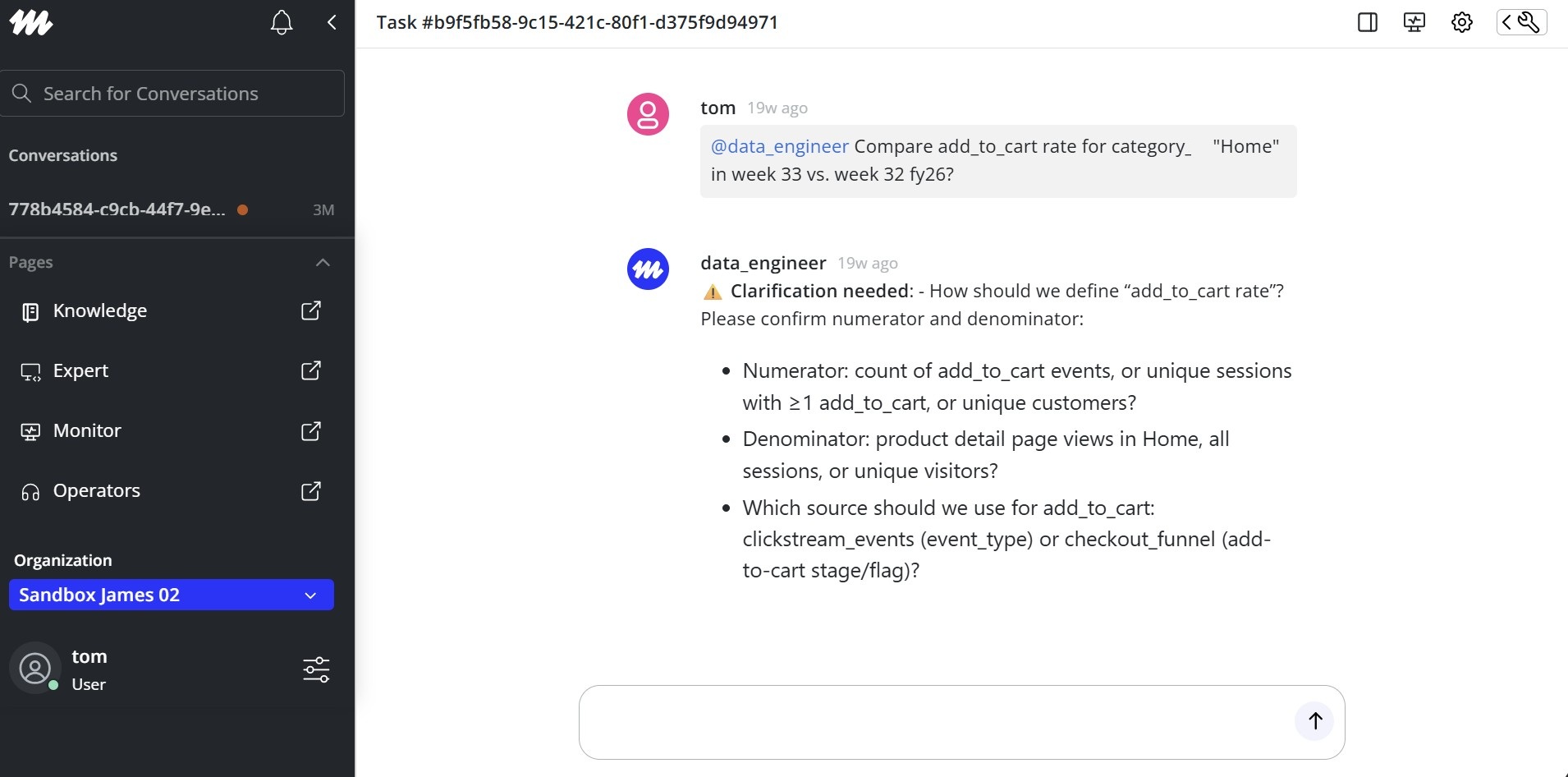

Conversational check-in — an AI Operator sends a message to an associate via the chat interface, waits for a response, and continues the flow based on what the associate says. This is the lightest form of oversight: no structured data, no form submission. The associate reads, replies, and the operator proceeds. The Chat Service and AI Operators handle this natively.

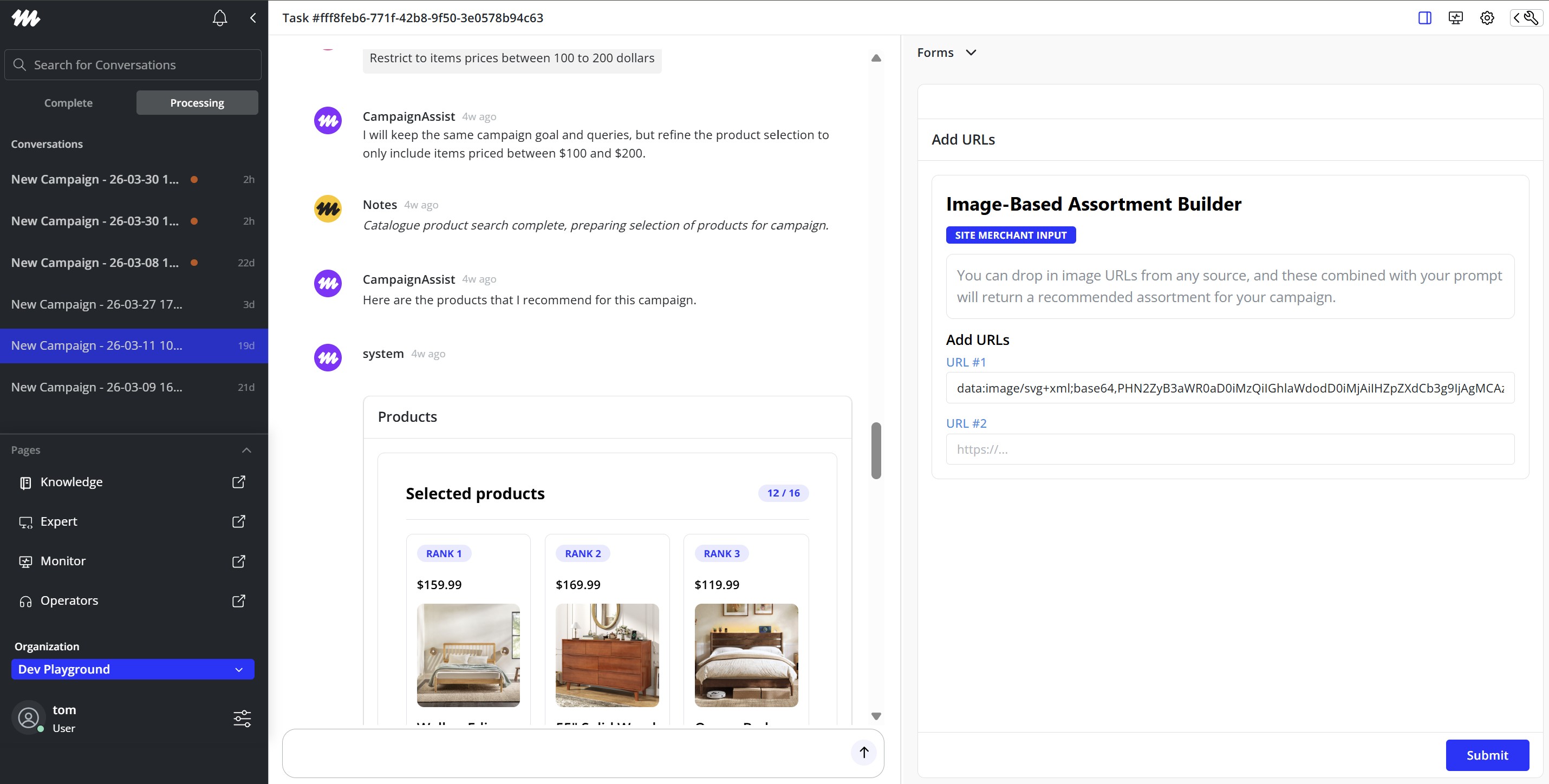

Structured input or approval — an AI Operator surfaces a form to the associate, requiring them to review data, make a selection, or approve an action before the flow continues. This is the right pattern when the decision requires presenting organised information or capturing a specific structured response. Form Builder and Dynamic Forms handle this.

Most production flows combine both: a conversational operator that knows when to hand off to a form for a specific decision, then resumes conversation once the associate has responded.
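The combined pattern can be sketched roughly as follows. This is a minimal illustration in plain Python, not MelodyArc's API: `ask_chat`, `ask_form`, `FlowState`, and `run_refund_flow` are hypothetical names, and the refund scenario is invented for the example. The two callables stand in for whatever the Chat Service and Form Builder actually expose.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical stand-ins for the chat and form channels; not MelodyArc APIs.
AskChat = Callable[[str], str]               # message -> associate's free-text reply
AskForm = Callable[[str, List[str]], str]    # prompt, options -> selected option


@dataclass
class FlowState:
    """Illustrative container for what the flow collects along the way."""
    values: Dict[str, str] = field(default_factory=dict)
    transcript: List[str] = field(default_factory=list)


def run_refund_flow(ask_chat: AskChat, ask_form: AskForm) -> FlowState:
    state = FlowState()

    # 1. Conversational check-in: free-text question, free-text reply.
    reply = ask_chat("A customer is asking for a refund. Proceed?")
    state.transcript.append(reply)
    if "yes" not in reply.lower():
        state.values["outcome"] = "stopped_by_associate"
        return state

    # 2. Structured decision: hand off to a form for one specific choice.
    choice = ask_form("Select refund type", ["full", "partial", "store_credit"])
    state.values["refund_type"] = choice

    # 3. Resume the conversation once the form has been submitted.
    state.transcript.append(ask_chat(f"Recorded '{choice}'. Anything to add?"))
    state.values["outcome"] = "completed"
    return state


if __name__ == "__main__":
    # Simulated associate: agrees in chat, picks the second form option.
    result = run_refund_flow(
        ask_chat=lambda message: "Yes, go ahead",
        ask_form=lambda prompt, options: options[1],
    )
    print(result.values)
```

Passing the two channels in as callables keeps the flow logic independent of how the associate is reached, which makes it easy to simulate the human in tests.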

Human-in-the-loop architectures

The two examples below show how human oversight sits within real MelodyArc automation flows.

Forms: AI-created forms that populate dynamically for each step of the process at hand.

Chat: Enterprises can decide how much freedom humans and AI have in human-to-AI and AI-to-AI chat interfaces.

Designing oversight as a removable layer

Human-in-the-loop steps work best when they are designed to be conditional from the start — a gate that can be opened or bypassed, not a structural dependency that the rest of the flow is built around.

A few practical principles:

Scope the decision, not the flow. The human step should own a specific decision point — approve, reject, provide missing input — not supervise the whole process. The narrower the scope, the easier it is to automate later.

Write the output to the token the same way automation would. When an associate approves a message or selects an option via a form, that value lands in the data token just as it would if a rule or model had produced it. Downstream points do not need to know whether a human or the platform filled that value.

Gate on confidence, not on category. The trigger for human involvement should be a condition (confidence score below a limit, entity value missing, exception flag set), not a permanent assignment of certain task types to human review. As the automation's confidence improves, relax the condition so that fewer cases reach a human, for example by lowering the confidence limit, or remove the gate entirely.

Track bypass rates. If a human consistently approves without changes, the step is a candidate for removal. If they consistently edit, the model or rule producing that output needs work. Neither insight is visible unless the flow is instrumented.
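The principles above can be illustrated with a short sketch. Everything in it is an assumption made for the example: the token is modelled as a plain dictionary, and `needs_review`, `resolve_reply`, `ReviewStats`, the key names, and the 0.8 default limit are hypothetical rather than part of MelodyArc. The human step owns one decision, writes its result to the same key the automated path uses, is triggered by a condition, and is counted so that approval and edit rates are visible.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional

# All names are hypothetical; the token is modelled as a plain dict.
Token = Dict[str, Any]
Reviewer = Callable[[str], str]   # draft -> text the associate approves or edits


@dataclass
class ReviewStats:
    """Counts used to judge whether the gate is still needed."""
    auto: int = 0        # gate bypassed, no human involved
    approved: int = 0    # human returned the draft unchanged
    edited: int = 0      # human changed the draft

    def approval_rate(self) -> Optional[float]:
        reviewed = self.approved + self.edited
        return self.approved / reviewed if reviewed else None


def needs_review(token: Token, min_confidence: float) -> bool:
    # The gate is a condition on the data, not on the task type.
    return (
        float(token.get("confidence", 0.0)) < min_confidence
        or token.get("customer_id") is None
        or bool(token.get("exception_flag", False))
    )


def resolve_reply(token: Token, reviewer: Reviewer, stats: ReviewStats,
                  min_confidence: float = 0.8) -> Token:
    draft = str(token["draft_reply"])
    if needs_review(token, min_confidence):
        final = reviewer(draft)          # scoped to one decision: approve or edit
        if final == draft:
            stats.approved += 1
        else:
            stats.edited += 1
    else:
        final = draft
        stats.auto += 1
    # Same key either way: downstream steps cannot tell who produced the value.
    token["final_reply"] = final
    return token


if __name__ == "__main__":
    stats = ReviewStats()
    approve_unchanged: Reviewer = lambda draft: draft
    resolve_reply({"draft_reply": "Refund issued.", "confidence": 0.95,
                   "customer_id": "c-1"}, approve_unchanged, stats)
    resolve_reply({"draft_reply": "Refund issued.", "confidence": 0.40,
                   "customer_id": "c-1"}, approve_unchanged, stats)
    print(stats, stats.approval_rate())
```

In this sketch, lowering `min_confidence` routes fewer items to a human, and replacing the `needs_review` call with `False` removes the gate without touching anything downstream. An approval rate that stays close to 1.0 suggests the step is a candidate for removal, while a high share of edits points back at the model or rule producing the draft.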

Further reading

| Topic | Where to go |
|---|---|
| Sending a message and awaiting a reply | AI Operators — conversational pattern |
| Surfacing a form to an associate | Form Builder |
| Building rich, conditional form UI | Dynamic Forms |
| Setting up your first operator | Setting Up Your First Operator |
